Designing Multi-Camera Edge Robots: Where Synchronization, Bandwidth and Thermal Limits Break Systems

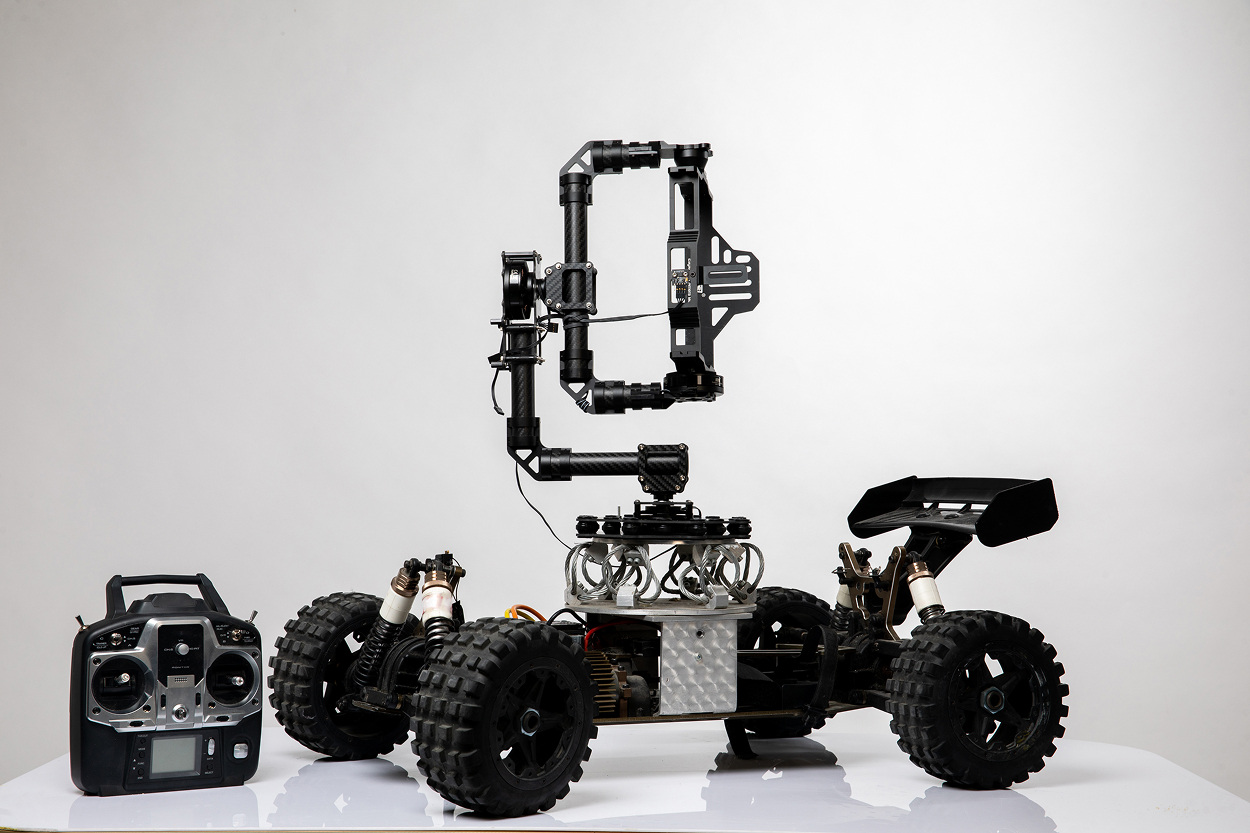

Multi-camera perception is one of the first points where edge robotics systems stop scaling linearly and start failing in complex, coupled ways. Adding cameras is often treated as a straightforward improvement: more viewpoints, more data, better perception. In practice, each additional camera increases pressure on three shared system dimensions: timing alignment, data movement, and thermal envelope. These dimensions are tightly coupled, and once one of them is pushed beyond its limit, the others follow.

A multi-camera robot is not a collection of independent sensors. It is a synchronized measurement system where spatial correctness depends on temporal alignment, where perception accuracy depends on memory throughput, and where sustained performance depends on thermal stability. The engineering challenge is not enabling multiple streams, but keeping the entire system within a bounded operating region under worst-case conditions.

Synchronization Is Not a Feature, It Is a Constraint Boundary

Camera synchronization is often described in simplified terms as “triggering all sensors at the same time.” In real systems, synchronization is not a binary property but a continuous error budget that must be managed across multiple layers. Even when cameras share a trigger signal, the moment when useful data becomes available differs due to sensor physics, interface latency, and software buffering.

At the sensor level, exposure timing defines the reference point. Global shutter sensors capture all pixels simultaneously, while rolling shutter sensors introduce a time gradient across the frame that can reach several milliseconds depending on resolution and readout speed. In a moving scene, this alone creates internal distortion before any inter-camera misalignment is considered.
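The size of the rolling-shutter gradient follows from the sensor's line time: each row starts exposing one line period after the previous row. A minimal sketch, assuming a hypothetical sensor mode with 1080 rows and a 5 µs line time (real values come from the sensor datasheet and mode configuration):

```python
def row_offset_ms(row: int, line_time_us: float) -> float:
    """Exposure-start offset of `row` relative to row 0 for a rolling shutter."""
    return row * line_time_us / 1000.0


def rolling_shutter_skew_ms(num_rows: int, line_time_us: float) -> float:
    """Time between exposure start of the first and the last row."""
    return row_offset_ms(num_rows - 1, line_time_us)


# Hypothetical sensor mode: 1080 active rows, 5 µs line time (assumed values).
skew = rolling_shutter_skew_ms(1080, 5.0)
print(f"top-to-bottom skew: {skew:.1f} ms")  # 1079 row periods x 5 µs ≈ 5.4 ms
```

A global shutter sensor corresponds to a skew of zero in this model.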

After exposure, the sensor performs readout, which can take from hundreds of microseconds to several milliseconds. Serialized interfaces such as GMSL or FPD-Link add deterministic but non-zero transport latency, typically in the range of tens to hundreds of microseconds. On the host side, drivers introduce buffering, and DMA transfers into memory are subject to arbitration delays.
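One way to reason about the resulting skew is to add up, per camera, every delay between the shared trigger and the moment the complete frame sits in memory, then compare cameras. The sketch below does that; the `CameraPath` type and all delay values are hypothetical placeholders, not measurements of any particular sensor or link:

```python
from dataclasses import dataclass


@dataclass
class CameraPath:
    """Delays (µs) from the shared trigger until one camera's frame is in DRAM."""
    name: str
    exposure_us: float
    readout_us: float
    transport_us: float      # serializer/deserializer link latency
    driver_dma_us: float     # driver buffering + DMA arbitration

    def availability_us(self) -> float:
        return self.exposure_us + self.readout_us + self.transport_us + self.driver_dma_us


def availability_skew_us(paths: list[CameraPath]) -> float:
    """Spread between the earliest and latest complete frame across cameras."""
    times = [p.availability_us() for p in paths]
    return max(times) - min(times)


# Placeholder values: same trigger, but different exposure and host-side delays.
cams = [
    CameraPath("front", 2000.0, 8000.0, 120.0, 300.0),
    CameraPath("left", 2000.0, 8000.0, 150.0, 900.0),
    CameraPath("right", 4000.0, 8000.0, 110.0, 450.0),  # longer auto-exposure
]
print(f"availability skew: {availability_skew_us(cams):.0f} µs")  # 2140 µs
```

Deterministic terms such as link latency can be calibrated out as fixed per-camera offsets; variable terms such as auto-exposure changes and DMA arbitration cannot.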

The result is that even with perfect trigger alignment, frame availability across cameras is skewed. In a dynamic environment, this skew directly translates into spatial error. For example, an object moving at 5 m/s introduces a 5 mm displacement per millisecond. A 4 ms misalignment between cameras results in a 2 cm positional error, which is enough to corrupt stereo disparity or multi-view fusion.
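The same arithmetic can be inverted into a skew budget: given an acceptable spatial error and a maximum object speed, it yields the largest tolerable inter-camera misalignment. A small sketch (the 5 mm tolerance is an illustrative choice):

```python
def spatial_error_m(speed_m_s: float, skew_s: float) -> float:
    """Displacement of an object between two cameras' capture instants."""
    return speed_m_s * skew_s


def max_allowed_skew_s(speed_m_s: float, tolerance_m: float) -> float:
    """Largest inter-camera skew that keeps displacement within tolerance."""
    return tolerance_m / speed_m_s


err = spatial_error_m(5.0, 0.004)          # 5 m/s, 4 ms skew
print(f"error: {err * 100:.1f} cm")        # 2.0 cm
limit = max_allowed_skew_s(5.0, 0.005)     # keep error under 5 mm
print(f"skew budget: {limit * 1000:.1f} ms")  # 1.0 ms
```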

Software synchronization attempts to compensate by timestamping frames and aligning them in time. However, this introduces buffering. To match frames within a ±1 ms window, the system must hold incoming frames until corresponding frames from other cameras arrive. This increases end-to-end latency.
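A minimal sketch of such a timestamp matcher, assuming each camera delivers frames in timestamp order on a common clock. The `FrameSynchronizer` class and its matching rule (all queued heads within `max_spread_s` of the newest head) are illustrative choices, not a specific library's API:

```python
from collections import deque


class FrameSynchronizer:
    """Groups frames from several cameras whose timestamps lie close together.

    Assumes each camera's frames arrive in timestamp order on a shared clock.
    A group is emitted when every camera's oldest queued frame lies within
    `max_spread_s` of the newest of those frames.
    """

    def __init__(self, num_cams: int, max_spread_s: float):
        self.queues = [deque() for _ in range(num_cams)]
        self.max_spread_s = max_spread_s

    def push(self, cam: int, ts: float, frame=None) -> list[list[tuple]]:
        """Add one frame; return any complete groups that became available."""
        self.queues[cam].append((ts, frame))
        groups = []
        while all(self.queues):  # every camera has at least one frame waiting
            heads = [q[0][0] for q in self.queues]
            newest = max(heads)
            # A head older than (newest - spread) can never be matched, because
            # later frames from the camera holding `newest` are even newer.
            stale = [i for i, t in enumerate(heads) if newest - t > self.max_spread_s]
            if not stale:
                groups.append([q.popleft() for q in self.queues])
            for i in stale:
                self.queues[i].popleft()
        return groups  # empty: still waiting, and that wait is added latency


sync = FrameSynchronizer(num_cams=2, max_spread_s=0.001)
sync.push(0, 0.0000)          # returns []: waiting for camera 1
print(sync.push(1, 0.0005))   # [[(0.0, None), (0.0005, None)]]
```

Every frame that sits in a queue waiting for its partners adds that waiting time to end-to-end latency. A production version would also need a timeout so that a stalled camera cannot block the other streams indefinitely.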

The synchronization problem therefore becomes a bounded trade-off. Reducing temporal error increases buffering and latency. Reducing latency increases temporal misalignment. The acceptable balance depends on application requirements, but it must be explicitly defined and enforced.

Data Volume Scales Linearly, Bandwidth Does Not

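The most immediate scaling effect in multi-camera systems is data volume. A single 1920×1080 stream at 30 fps with 24 bits per pixel (RGB888) generates approximately 1.5 Gbps of raw data. Four cameras produce around 6 Gbps, and eight cameras approach 12 Gbps. A 4K (3840×2160) stream has four times as many pixels, so it multiplies these numbers by four. Formats with fewer bits per pixel, such as 12-bit RAW or YUV 4:2:0, scale these figures down proportionally.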
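These figures follow from width × height × frame rate × bits per pixel. A minimal sketch; the bit depths are assumptions (24-bit RGB reproduces the numbers above, 12-bit RAW is shown for comparison):

```python
def raw_rate_gbps(width: int, height: int, fps: float, bits_per_pixel: int) -> float:
    """Uncompressed video data rate in gigabits per second."""
    return width * height * fps * bits_per_pixel / 1e9


per_cam = raw_rate_gbps(1920, 1080, 30, 24)  # RGB888
for n in (1, 4, 8):
    print(f"{n} x 1080p30 RGB888: {n * per_cam:.2f} Gbps")           # 1.49, 5.97, 11.94
print(f"4K30 RGB888: {raw_rate_gbps(3840, 2160, 30, 24):.2f} Gbps")  # 5.97
print(f"1080p30 RAW12: {raw_rate_gbps(1920, 1080, 30, 12):.2f} Gbps")  # 0.75
```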

However, the real issue is not raw input but cumulative data movement inside the system. Each frame typically passes through multiple stages: capture, image signal processing, memory storage, AI inference, and post-processing. Each stage may read and write the same data, effectively multiplying bandwidth demand.

A simplified internal data path looks like this:

- sensor → ISP (on-chip or external)

- ISP → DRAM (write)

- DRAM → NPU/GPU (read)

- NPU/GPU → DRAM (write results)

- DRAM → CPU (read for post-processing)

Even if the input stream is 6 Gbps, total memory traffic can easily reach 20–30 Gbps (roughly 2.5–3.75 GB/s) because the same frames pass through memory several times. On embedded platforms with LPDDR4 or LPDDR5 memory, theoretical peak bandwidth may be in the range of 20–50 GB/s, and the bandwidth actually achievable under mixed access patterns is lower. While this seems sufficient, it is shared with all system components.
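A back-of-the-envelope traffic estimate multiplies the input rate by the number of full-frame passes through DRAM. In the sketch below, the per-stage factors, the extra resize/format-conversion stage, and the 25.6 GB/s peak are assumptions chosen for illustration; inference results are modeled as a small fraction of a frame:

```python
# (stage, DRAM traffic as a multiple of the input stream): assumed values
PASSES = [
    ("ISP -> DRAM write", 1.0),
    ("resize/format conversion (read + write)", 2.0),
    ("DRAM -> NPU/GPU read", 1.0),
    ("NPU/GPU -> DRAM results", 0.1),
    ("DRAM -> CPU read for post-processing", 0.5),
]


def dram_traffic_gbps(input_gbps: float, passes: list[tuple[str, float]]) -> float:
    """Total DRAM traffic when each pass moves `factor` x the input stream."""
    return input_gbps * sum(factor for _, factor in passes)


def gbps_to_gbyte_per_s(gbps: float) -> float:
    return gbps / 8.0  # 8 bits per byte


input_gbps = 6.0        # four 1080p30 RGB888 streams
peak_gbyte_s = 25.6     # assumed theoretical DRAM peak
traffic = dram_traffic_gbps(input_gbps, PASSES)
gbs = gbps_to_gbyte_per_s(traffic)
print(f"{traffic:.1f} Gbps = {gbs:.2f} GB/s = {gbs / peak_gbyte_s:.0%} of peak")
```

The camera pipeline alone looks small against the peak; the problem appears when the other DRAM clients are added on top.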

The critical point is that bandwidth is not reserved. CPUs, GPUs, NPUs, and DMA engines compete for access. When aggregate demand approaches capacity, latency increases sharply due to queuing and arbitration delays. Unlike compute load, where performance tends to degrade gradually as CPU utilization rises, memory contention introduces sudden performance cliffs.
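Why the cliff is sudden rather than gradual can be illustrated with the textbook M/M/1 queue, where mean time in the system grows as 1/(1 - rho) with utilization rho. Real DRAM controllers are not M/M/1 queues (they reorder requests, batch row hits, and prioritize clients), so this is a qualitative sketch only:

```python
def mm1_mean_latency(service_time: float, utilization: float) -> float:
    """Mean time in system for an M/M/1 queue: W = S / (1 - rho)."""
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    return service_time / (1.0 - utilization)


for rho in (0.5, 0.7, 0.8, 0.9, 0.95, 0.99):
    w = mm1_mean_latency(1.0, rho)
    print(f"utilization {rho:.0%}: mean latency {w:5.1f}x the unloaded access time")
```

Going from 50% to 90% utilization multiplies mean latency by five in this model, and the last few percent before saturation cost more than everything before them.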

Memory Contention Propagates Into Timing Instability

Memory contention does not just slow down processing; it introduces variability. Access latency depends on queue depth, which depends on system load. This variability propagates into execution time, turning predictable pipelines into non-deterministic ones.

For example, an AI inference task that normally completes in 12 ms may occasionally take 18 ms when memory is saturated. If multiple camera streams are processed concurrently, these delays can overlap, creating bursts of latency that exceed system budgets.
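When fusion waits for every stream, a frame set is only as fast as its slowest member, so occasional per-stream spikes become frequent set-level spikes. A rough sketch, assuming each stream independently exceeds its budget with probability `p` per frame. Real contention spikes are correlated because the streams share one memory system, so treat this as an illustration of the trend rather than a prediction:

```python
def p_set_late(p_stream_late: float, num_streams: int) -> float:
    """Probability that at least one of N independent streams is late,
    i.e. that a frame set waiting on all of them misses its budget."""
    return 1.0 - (1.0 - p_stream_late) ** num_streams


fps = 30
for n in (1, 4, 8):
    p = p_set_late(0.02, n)  # assumed: 2% of frames per stream take 18 ms instead of 12 ms
    print(f"{n} streams: {p:.3f} late sets per frame, ~{p * fps:.1f} per second")
```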

This directly affects synchronization. When processing latency varies between streams, alignment becomes inconsistent. Frames that were captured simultaneously may be processed at different times, breaking temporal coherence.

Control systems that depend on perception outputs are particularly sensitive to this effect. Variable latency introduces jitter in perception-to-control pipelines, which can destabilize feedback loops.
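Jitter is straightforward to measure if every perception output carries the capture timestamp of the frame it was computed from. A minimal sketch, assuming paired timestamps on one clock; the function name and the sample log are hypothetical:

```python
from statistics import mean, pstdev


def latency_jitter_ms(capture_ts_s: list[float], output_ts_s: list[float]) -> dict[str, float]:
    """Perception latency statistics from paired capture/output timestamps (seconds)."""
    if len(capture_ts_s) != len(output_ts_s) or not capture_ts_s:
        raise ValueError("need equal-length, non-empty timestamp lists")
    lat = [(o - c) * 1000.0 for c, o in zip(capture_ts_s, output_ts_s)]
    return {
        "mean_ms": mean(lat),
        "std_ms": pstdev(lat),                   # jitter as standard deviation
        "peak_to_peak_ms": max(lat) - min(lat),  # worst-case spread
    }


# Hypothetical log: ~12 ms latency, with one ~18 ms outlier under contention.
cap = [0.000, 0.033, 0.067, 0.100]
out = [0.012, 0.045, 0.085, 0.112]
print(latency_jitter_ms(cap, out))
```

For control-loop design the worst case often matters more than the average, so the peak-to-peak figure (or a high percentile over a long log) can be more informative than the standard deviation.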

Pipeline Backpressure: Where Latency Turns Into Data Loss

Multi-camera pipelines are inherently staged. Each stage processes data at a certain rate, and the overall system is limited by the slowest stage. When downstream stages cannot keep up, backpressure builds.

Backpressure manifests as buffer growth. Frames accumulate in queues, increasing latency. When buffers reach capacity, frames must be dropped. The system transitions from latency-bound to loss-bound behavior.
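The transition from latency-bound to loss-bound behavior can be reproduced with a toy single-stage model: frames arrive at a fixed period, each needs a given processing time, and a bounded queue drops new arrivals when full. `simulate_stage` and its tail-drop policy are illustrative assumptions; many camera pipelines drop the oldest frame instead, to keep outputs fresh:

```python
from collections import deque


def simulate_stage(period_ms: float, service_ms: list[float], capacity: int):
    """Toy model of one pipeline stage with a bounded input queue.

    Frame i arrives at i * period_ms and needs service_ms[i] to process.
    `capacity` counts frames waiting (not the one being processed).
    Returns (dropped frame indices, {frame index: arrival-to-completion ms}).
    """
    waiting = deque()        # (index, arrival time) of queued frames
    busy_until = 0.0         # when the stage finishes its current frame
    dropped, latency = [], {}

    def run_until(now: float) -> None:
        nonlocal busy_until
        # Start every queued frame whose turn comes at or before `now`.
        while waiting and busy_until <= now:
            idx, arrived = waiting.popleft()
            start = max(busy_until, arrived)
            busy_until = start + service_ms[idx]
            latency[idx] = busy_until - arrived

    for i in range(len(service_ms)):
        now = i * period_ms
        run_until(now)
        if len(waiting) >= capacity:
            dropped.append(i)            # buffer full: latency turns into loss
        else:
            waiting.append((i, now))
            run_until(now)               # start immediately if the stage is idle
    run_until(float("inf"))              # drain what is left
    return dropped, latency


dropped, latency = simulate_stage(10.0, [25.0] * 6, capacity=1)
print(dropped)   # [2, 4]
print(latency)   # {0: 25.0, 1: 40.0, 3: 45.0, 5: 50.0}
```

With a 10 ms frame period, 25 ms processing time, and one buffer slot, latency climbs from 25 ms to 50 ms before the queue starts discarding frames.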

Frame drops are not random. They tend to occur in bursts when load exceeds capacity. This creates discontinuities in data streams, which are particularly damaging for algorithms that rely on temporal continuity, such as SLAM or tracking.
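Because drops arrive in bursts, it helps to log them as bursts rather than as a single drop counter. A minimal sketch, assuming each frame carries an increasing sequence counter without wraparound (such as the frame sequence number many capture drivers report); the `needs_reinit` threshold is a hypothetical policy a tracker might apply:

```python
def drop_bursts(seq: list[int]) -> list[tuple[int, int]]:
    """Find gaps in received frame sequence numbers.

    Returns (first missing sequence number, number of frames missing) per gap.
    Assumes increasing counters without wraparound.
    """
    return [(prev + 1, cur - prev - 1)
            for prev, cur in zip(seq, seq[1:]) if cur > prev + 1]


def needs_reinit(bursts: list[tuple[int, int]], max_gap_frames: int) -> bool:
    """Hypothetical policy: reinitialize tracking after an over-long gap."""
    return any(length > max_gap_frames for _, length in bursts)


received = [0, 1, 2, 5, 6, 7, 8, 12, 13]
bursts = drop_bursts(received)
print(bursts)                      # [(3, 2), (9, 3)]
print(needs_reinit(bursts, 2))     # True
```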

Backpressure is often triggered by transient conditions:

- increased scene complexity (more objects → heavier inference)

- simultaneous processing of multiple high-resolution streams

- background tasks consuming shared resources

Because these conditions are dynamic, systems that appear stable in controlled tests may fail in real environments.

Interaction with AI Workloads: Throughput vs Determinism

AI inference introduces a fundamental conflict between throughput and determinism. NPUs and GPUs are optimized for high throughput, processing large batches of data efficiently. However, their execution time is not strictly bounded.

Inference latency depends on model architecture, input size, and resource availability. Even small variations in memory access patterns or cache behavior can change execution time. When multiple streams are processed, scheduling becomes complex, and latency variability increases.

In multi-camera systems, inference is often applied to each stream independently. This multiplies compute demand and increases contention. To manage this, many systems decouple capture and inference: frames are queued and processed asynchronously, allowing the system to maintain throughput.

However, asynchronous processing introduces temporal misalignment. Inference results correspond to different capture times, and aligning them requires additional buffering and interpolation.
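Aligning results from different capture times usually means resampling one stream's estimates to another stream's timestamps. A minimal sketch using linear interpolation, which assumes roughly constant velocity between two results; the function and the sample values are hypothetical:

```python
def interpolate(t: float, t0: float, p0: tuple[float, ...],
                t1: float, p1: tuple[float, ...]) -> tuple[float, ...]:
    """Linearly interpolate a position estimate to time t between two results.

    Assumes constant velocity between t0 and t1; t outside [t0, t1]
    extrapolates, which degrades quickly.
    """
    if t1 == t0:
        return p0
    a = (t - t0) / (t1 - t0)
    return tuple(x0 + a * (x1 - x0) for x0, x1 in zip(p0, p1))


# Camera B detected an object at t=0.100 s and t=0.133 s (hypothetical values);
# camera A's result is stamped t=0.110 s, so B's estimate is resampled to match.
print(interpolate(0.110, 0.100, (2.00, 0.50), 0.133, (2.10, 0.50)))
```

The interpolated value at t can only be produced once the later result at t1 exists, which is where the additional buffering comes from.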

The system must therefore choose between:

- synchronous processing → better alignment, lower throughput

- asynchronous processing → higher throughput, more alignment error

Neither option is universally correct. The choice depends on application tolerance for latency and error.

Thermal Limits Define Sustained Performance

Thermal constraints are often underestimated because they do not appear in short tests. A system may handle peak load for a few seconds but fail under sustained operation.

Processing multiple camera streams and running AI inference generates significant power dissipation. In compact edge robots, cooling is limited to passive solutions or small fans. Heat accumulates, raising component temperature.

As temperature increases, processors reduce frequency to avoid damage. This thermal throttling increases processing time, which increases latency. Increased latency leads to buffer growth and backpressure, which keeps the processors fully loaded with queued work and sustains heat generation. The system enters a feedback loop.

Thermal behavior depends on:

- ambient temperature (e.g., 40–60°C in industrial environments)

- enclosure design and airflow

- workload duration and intensity

Designing for thermal stability requires analyzing worst-case scenarios, not average conditions. This includes running all cameras at full resolution, executing maximum AI workload, and operating at high ambient temperature.
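A lumped first-order thermal model shows why short tests pass: the heat capacity of the package and heatsink delays the temperature rise, while the steady-state value depends only on power, thermal resistance to ambient, and ambient temperature. A rough sketch; the power, thermal resistance, time constant, and throttle threshold below are assumptions, not values for any specific module:

```python
import math
from typing import Optional


def steady_state_temp_c(ambient_c: float, power_w: float, theta_c_per_w: float) -> float:
    """Lumped model: temperature rise = power x thermal resistance to ambient."""
    return ambient_c + power_w * theta_c_per_w


def time_to_throttle_s(ambient_c: float, power_w: float, theta_c_per_w: float,
                       throttle_c: float, tau_s: float) -> Optional[float]:
    """First-order step response T(t) = Ta + P*theta*(1 - exp(-t/tau)).

    Returns the time at which T reaches throttle_c, or None if it never does.
    """
    rise_needed = throttle_c - ambient_c
    rise_final = power_w * theta_c_per_w
    if rise_needed <= 0:
        return 0.0                      # already at or above the threshold
    if rise_final <= rise_needed:
        return None                     # steady state stays below the threshold
    return -tau_s * math.log(1.0 - rise_needed / rise_final)


# Assumed values: 15 W sustained, 3.0 °C/W to ambient, 95 °C throttle, tau = 300 s.
for ambient in (25.0, 40.0, 60.0):
    t_ss = steady_state_temp_c(ambient, 15.0, 3.0)
    t_thr = time_to_throttle_s(ambient, 15.0, 3.0, 95.0, 300.0)
    when = "never" if t_thr is None else f"after {t_thr / 60:.1f} min"
    print(f"ambient {ambient:.0f} °C: steady state {t_ss:.0f} °C, throttles {when}")
```

With these numbers the system never throttles at 25 °C or 40 °C but throttles after about 7.5 minutes at 60 °C, so a one-minute bench test at room temperature would reveal nothing.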

Combined Failure Mode: A System-Level Cascade

The most important aspect of multi-camera systems is that failures are rarely isolated. A typical failure sequence begins with increased workload, such as a complex scene requiring more AI processing.

Heavier inference increases both compute time and memory bandwidth usage. Memory contention increases latency, causing buffers to fill. As buffers grow, end-to-end latency increases, and synchronization degrades.

At the same time, increased power consumption raises temperature. Thermal throttling reduces processing speed, further increasing latency. The system crosses a threshold where it can no longer sustain real-time operation.

Perception outputs become inconsistent or delayed. Downstream systems receive incorrect or stale data. The robot may continue operating but with degraded behavior, or it may fail entirely.

This cascade illustrates that synchronization, bandwidth, and thermal limits must be treated as a single system problem.

Designing for Stability: Practical Constraints and Trade-offs

Robust multi-camera systems are designed by constraining the problem rather than maximizing performance. This involves defining explicit budgets and ensuring that all components operate within them.

Synchronization must be bounded within a defined error window, typically on the order of hundreds of microseconds for high-precision systems. This requires combining hardware triggers with precise timestamping and controlled buffering.
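Enforcing the bound means measuring it continuously, not only at bring-up. A minimal sketch, assuming every frame carries a hardware capture timestamp on a clock shared by all cameras; the function name, the 200 µs budget, and the log values are hypothetical:

```python
def sync_report(frame_sets_us: list[list[float]], budget_us: float):
    """Check per-trigger timestamp spread against a synchronization budget.

    frame_sets_us[k] holds the capture timestamps (µs, common clock) of all
    cameras for trigger k. Returns (indices over budget, worst spread).
    """
    spreads = [max(ts) - min(ts) for ts in frame_sets_us]
    over = [k for k, s in enumerate(spreads) if s > budget_us]
    return over, max(spreads)


# Hypothetical log of three triggers across three cameras, 200 µs budget.
log = [[0.0, 120.0, 80.0], [33333.0, 33683.0, 33433.0], [66667.0, 66717.0, 66677.0]]
over, worst = sync_report(log, 200.0)
print(over, worst)   # [1] 350.0
```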

Memory bandwidth must be budgeted with margin. Engineers must account for worst-case data movement, including multiple passes through memory. Techniques such as zero-copy pipelines and direct memory access reduce overhead but require careful design.
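A budget check can be as simple as summing the worst-case demand of every DRAM client and comparing it with usable capacity minus a safety margin. A sketch in which the peak, efficiency factor, margin, and every per-client demand are hypothetical placeholders for measured worst-case values:

```python
def bandwidth_budget(peak_gbyte_s: float, efficiency: float, margin: float,
                     demands_gbyte_s: dict[str, float]) -> tuple[float, float, bool]:
    """Compare summed worst-case DRAM demand with usable capacity minus margin.

    efficiency: fraction of theoretical peak achievable in practice (assumed).
    margin: fraction of usable bandwidth kept free as headroom.
    """
    budget = peak_gbyte_s * efficiency * (1.0 - margin)
    total = sum(demands_gbyte_s.values())
    return total, budget, total <= budget


# Every value here is a hypothetical placeholder for a worst-case measurement.
demands = {
    "camera capture + ISP": 3.5,
    "NPU (activations + weights)": 12.0,
    "GPU pre/post-processing": 6.0,
    "CPU (SLAM, control)": 4.0,
    "video encoder": 2.0,
}
total, budget, ok = bandwidth_budget(51.2, 0.7, 0.3, demands)
print(f"demand {total:.1f} GB/s vs budget {budget:.1f} GB/s -> {'OK' if ok else 'OVER'}")
```

In this made-up configuration the camera pipeline is a small share of the total, yet the system as a whole is over budget.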

Thermal design must ensure that the system can sustain peak workload indefinitely. This may require reducing resolution, limiting frame rate, or selecting more efficient hardware.

Workloads must be scheduled to avoid peak overlap. For example, processing of different camera streams can be staggered to reduce instantaneous bandwidth demand.
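Staggering can be planned by spreading processing start times across the frame period and checking how many streams overlap at worst. A sketch under simplifying assumptions (equal, fixed per-frame work; the function names are hypothetical). It staggers processing, not capture: shifting the camera triggers instead would reintroduce inter-camera skew.

```python
def stagger_offsets_ms(num_streams: int, period_ms: float) -> list[float]:
    """Spread processing start times evenly across one frame period."""
    return [i * period_ms / num_streams for i in range(num_streams)]


def peak_concurrency(offsets_ms: list[float], busy_ms: float, period_ms: float) -> int:
    """Maximum number of streams processing at once in a periodic schedule.

    Each stream is busy for busy_ms starting at its offset, every period.
    Concurrency only rises at an interval start, so checking those instants
    is sufficient.
    """
    peak = 0
    for start in offsets_ms:
        active = sum(1 for off in offsets_ms if (start - off) % period_ms < busy_ms)
        peak = max(peak, active)
    return peak


period, busy = 1000.0 / 30, 12.0      # 30 fps, assumed 12 ms of work per frame
aligned = [0.0] * 4
staggered = stagger_offsets_ms(4, period)
print(peak_concurrency(aligned, busy, period))    # 4
print(peak_concurrency(staggered, busy, period))  # 2
```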

In practice, the most effective systems are those that operate below maximum capacity, leaving headroom for variability.

Quick Overview

Multi-camera edge robots rely on tightly synchronized sensing pipelines that are constrained by memory bandwidth and thermal limits.

Key Applications

Autonomous robots, SLAM systems, multi-view perception

Benefits

Improved coverage and redundancy in perception

Challenges

Synchronization accuracy, bandwidth saturation, thermal stability

Outlook

Hardware and system-level optimization to support higher sensor density and sustained performance

Related Terms

computer vision, SLAM, edge AI, synchronization, memory bandwidth
