Securing Humanoids: Why FPGA + TPM + PQC Is Becoming the Reference Architecture

Solution Architect at Promwad

Humanoid robots are leaving controlled lab environments and entering the real world — warehouses, hospitals, hotels, public spaces. As they do, the industry conversation has rightly focused on safety: how to prevent collisions, limit force, ensure fail-safes. But there is a less discussed problem that deserves equal attention: cybersecurity.

A humanoid can be perfectly safe in isolation. Connect it to a network — and a single exploited vulnerability in its operating system, middleware, or AI stack can turn that safe robot into a liability. Denial of service, hijacked actuators, intercepted sensor data — the attack surface of a walking, camera-equipped, network-connected machine is vast and largely uncharted.

This was the central topic of a recent security seminar hosted by Lattice Semiconductor together with Promwad and SEALSQ, where we examined the technologies needed to make humanoids not only safe but genuinely secure. Below is a distilled overview of the architectural approach we discussed — and why it matters for anyone designing autonomous systems today.

The Problem: Software-Only Security Is Not Enough

A critical weakness in most robotic platforms is that safety logic lives in software. If the OS, the ROS middleware, or the AI inference layer is compromised, software-based safety mechanisms can be bypassed entirely. For a humanoid operating in close proximity to people, this is not an abstract risk — it is a design flaw.

What the industry needs is a hardware-rooted security architecture where physical safety guarantees cannot be overridden by software, where the platform can prove its own integrity, and where cryptographic protections remain valid not just today but over the 15–20 year service life of the machine.

Three technologies, when combined, address these requirements: FPGA, TPM, and post-quantum cryptography (PQC).

Photo: The iCub Production Lab of CRIS, Italy (CC BY 4.0)

FPGA: The Hardware-Level Safety Supervisor

An FPGA (Field-Programmable Gate Array) operates below the OS layer. It sits between the sensors, the CPU, and the actuators — and it cannot be bypassed by software. Even if the entire software stack is compromised, the FPGA continues to enforce safety constraints at the hardware level.

This matters for two reasons:

- First, FPGAs provide deterministic, real-time response — typically under one microsecond. In safety-critical applications, there is no room for the variable latency that comes with CPU-based processing.

- Second, the inherently parallel architecture of an FPGA enables simultaneous processing of multiple sensor streams: vision, lidar, IMU, temperature — each on its own timeline, all cross-validated in hardware. Techniques like hardware voting logic, dual safety checks, and sanity verification of incoming sensor data can all run in parallel, making decisions faster and more reliably than any sequential processor could.
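To make the voting and sanity-check idea concrete, here is a minimal behavioural sketch in Python. It is only a software model: in a real design this logic would be written in an HDL and evaluated in parallel in the FPGA fabric. The function name `vote_2oo3`, the plausibility range, and the tolerance are hypothetical and chosen for illustration.

```python
from typing import Optional, Sequence


def vote_2oo3(
    readings: Sequence[float], lo: float, hi: float, tolerance: float
) -> Optional[float]:
    """Model of a two-out-of-three voter with a range sanity check.

    Returns the agreed value, or None when no two plausible readings
    agree, in which case the supervisor should force a safe state.
    """
    # Sanity check: discard readings outside the physically plausible range.
    plausible = sorted(r for r in readings if lo <= r <= hi)

    # After sorting, neighbouring values are the closest candidates,
    # so it is enough to compare adjacent pairs.
    for a, b in zip(plausible, plausible[1:]):
        if b - a <= tolerance:
            return (a + b) / 2  # agreed value: midpoint of the agreeing pair

    return None  # no agreement: fail safe
```

With three redundant readings of the same quantity, `vote_2oo3([1.0, 1.02, 99.0], 0.0, 10.0, 0.05)` discards the implausible third reading and accepts the first two, while `vote_2oo3([1.0, 3.0, 5.0], 0.0, 10.0, 0.05)` returns `None` because no pair agrees. Returning `None` rather than a best guess reflects the fail-safe principle: when the sensors disagree, the supervisor stops trusting them.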

At Promwad, we apply this pattern in practice. In a recent project for a global OEM, we built an onboard safety platform for rail transport that combines an FPGA-based pre-processing layer with an edge AI computing module. The FPGA handles real-time ingestion and filtering of dense multi-sensor streams, while the AI-driven application layer runs under Embedded Linux.

The same architectural principle — FPGA as a deterministic hardware supervisor beneath the software stack — translates directly to humanoid robotics.

Photo: Berthold Bäuml, Researcher at the Institute of Robotics and Mechatronics, Germany, working with the humanoid robot Agile Justin (CC BY 4.0)

TPM: The Root of Trust

An FPGA guarantees that safety logic runs correctly in real time. But how do you know the right bitstream was loaded onto it in the first place? How do you verify that the firmware, the OS, and the application layer have not been tampered with?

This is the role of a Trusted Platform Module (TPM) — a dedicated secure chip that provides tamper-resistant key storage, cryptographic operations, and platform attestation. With over four billion TPMs deployed worldwide, this is mature, well-understood technology governed by the Trusted Computing Group standard.

In an FPGA-based system, the TPM verifies the bitstream before it is loaded, validates the firmware stack from BIOS through OS to application layer, and provides secure key storage for authentication and encrypted communications. It also enables secure command and control — establishing TLS connections, managing PKI certificates, and ensuring that external connectivity does not become an attack vector.
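One common way to build such a chain of trust is measured boot, where each stage is hashed into a TPM Platform Configuration Register (PCR) before it runs. The sketch below models the TPM 2.0 extend operation for a SHA-256 PCR bank in plain Python. It is a simplified illustration, not the flow of any particular product: a real TPM performs the extend inside the chip, and the component names and the choice to measure everything into a single register are assumptions.

```python
import hashlib
from typing import Iterable

PCR_SIZE = 32  # bytes in a SHA-256 PCR


def extend(pcr: bytes, component: bytes) -> bytes:
    """Model of PCR extend: new_pcr = SHA256(old_pcr || SHA256(component))."""
    digest = hashlib.sha256(component).digest()
    return hashlib.sha256(pcr + digest).digest()


def measure_chain(components: Iterable[bytes]) -> bytes:
    """Measure each boot stage in order, starting from an all-zero PCR."""
    pcr = bytes(PCR_SIZE)
    for component in components:
        pcr = extend(pcr, component)
    return pcr


# Hypothetical boot stages: bitstream, bootloader, kernel.
golden = measure_chain([b"bitstream-v1", b"bootloader-v1", b"kernel-v1"])
tampered = measure_chain([b"bitstream-EVIL", b"bootloader-v1", b"kernel-v1"])
print(golden != tampered)
```

Because every new value depends on the previous one, a change to any stage, or to the order of stages, yields a different final value. A remote verifier that knows the expected ("golden") value can therefore detect tampering from a single signed register readout.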

For humanoids specifically, TPMs solve a practical problem: these machines will operate in physically accessible environments, far from the guarded perimeters of a data center. Anyone can potentially touch, probe, or tamper with them. A TPM provides a hardware-anchored chain of trust that resists physical and logical attacks alike.

The analogy with drones is instructive. Autonomous drones face similar security requirements — platform integrity, secure boot, encrypted command channels — and TPM-based architectures are already deployed there. Humanoids represent a natural extension of the same approach.

PQC: Protecting Data Over a 20-Year Horizon

Here is a dimension that many robotics teams have not yet considered: a humanoid robot purchased today may still be in service in 2045. Think of it as an appliance — like a refrigerator, but one that collects video, audio, biometric data, and location information continuously.

Photo: Jinoh Lee, Researcher at the Institute of Robotics and Mechatronics, Germany, working with the humanoid walking robot TORO (CC BY 4.0)

The public-key algorithms that protect this data today, RSA and elliptic curve cryptography, would be broken by a cryptographically relevant quantum computer running Shor's algorithm. The exact timeline for such a machine is uncertain, but the regulatory direction is clear.

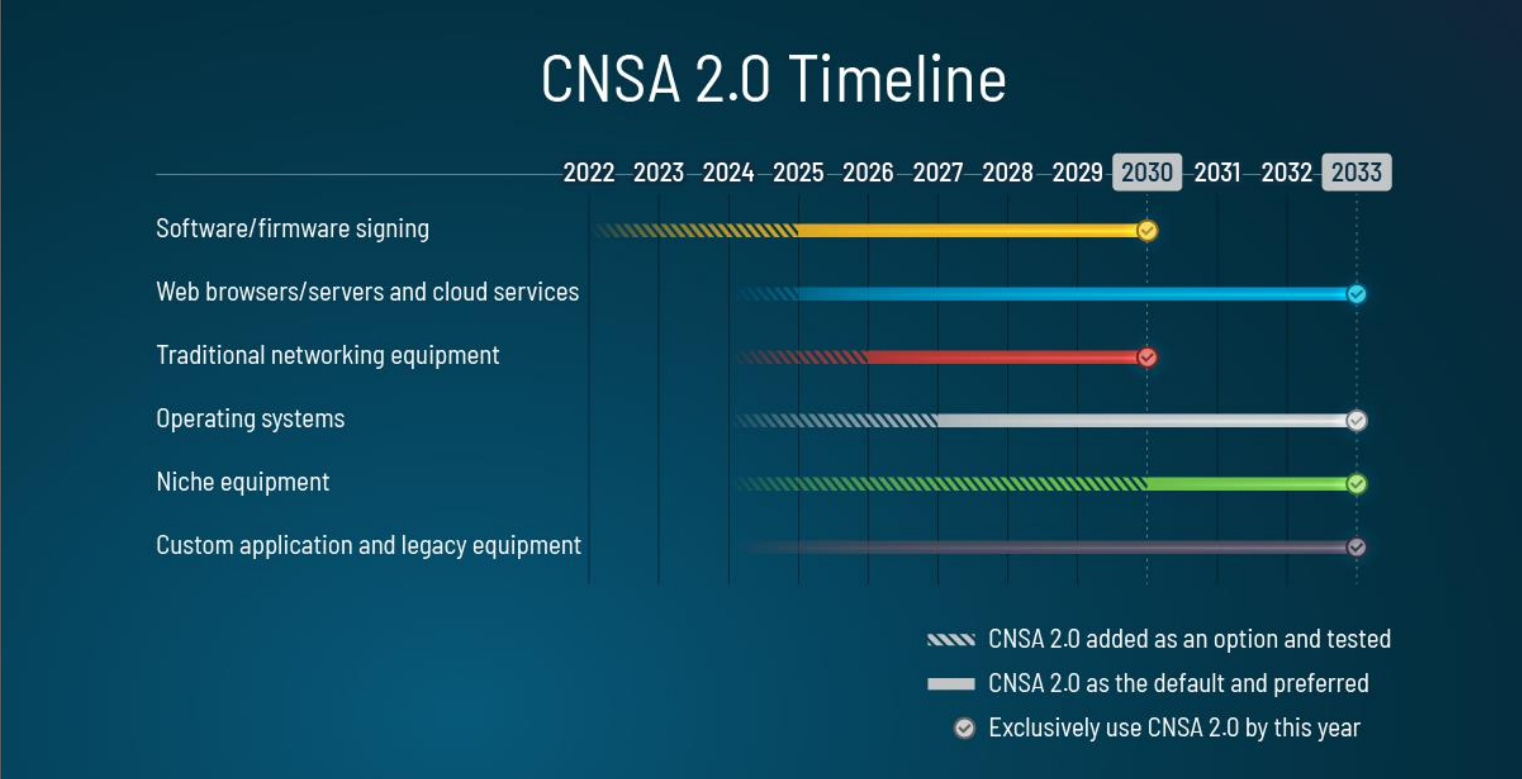

US Timeline

Post-quantum cryptography (PQC) is recommended now by NIST and the White House through policies like NSM-10 and OMB M-23-02, which require immediate inventories and planning. According to quantum.gov and NIST guidelines, PQC implementation for sensitive systems must begin no later than 2026, with phased migration. Full deployment targets 2033–2035 for federal and national security systems under CNSA 2.0 and NSM-10.

Image: US transition timeline, NSA | Commercial National Security Algorithm Suite 2.0

Europe Timeline

PQC preparation is recommended immediately, with EU roadmaps calling for national strategies by the end of 2026 and pilots in 2025–2026. High-risk critical infrastructure must transition by 2030, while full deployment aims for 2035 where feasible.

UK NCSC guidance sets discovery by 2028, high-priority migration by 2031, and completion by 2035.

The "harvest now, decrypt later" threat makes this urgent: adversaries can capture encrypted data transmissions today and decrypt them once quantum computers mature. For a humanoid that serves as, in effect, a walking surveillance platform, the volume of sensitive data at risk is substantial.
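A compact way to reason about this urgency is the inequality commonly attributed to Michele Mosca. The symbols below are the usual ones for that rule of thumb; the example numbers are illustrative assumptions, not forecasts.

```latex
% X: years the captured data must remain confidential
% Y: years needed to migrate the platform to quantum-safe cryptography
% Z: years until a cryptographically relevant quantum computer exists
X + Y > Z \quad\Longrightarrow\quad \text{data captured today is at risk}

% Illustrative assumption: X = 20 (data sensitive for the robot's service life), Y = 5
20 + 5 = 25 > Z \quad \text{whenever } Z < 25
```

Under these assumed figures, any estimate of Z below 25 years means that traffic recorded today could still be sensitive when it becomes decryptable. This is why long-lived platforms cannot wait for the timeline to become certain.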

Post-quantum cryptography addresses this by securing bitstream signing, firmware updates, over-the-air communications, and identity verification against future quantum attacks. And because PQC algorithms are still maturing, crypto agility — the ability to swap algorithms without redesigning the hardware — becomes essential. This is another area where FPGAs excel: their reconfigurable nature means cryptographic implementations can be updated in the field as standards evolve.
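In software terms, crypto agility usually means that every signed artifact carries an algorithm identifier and that verification is dispatched through a registry which can be updated in the field. The sketch below shows that pattern only. Python's standard library has no post-quantum signature scheme, so HMAC with two different hash functions stands in for the real verifiers; all identifiers (`standin-a`, `standin-b`, `verify_image`) are hypothetical.

```python
import hashlib
import hmac
from typing import Callable, Dict

# A verifier takes (key, message, tag) and reports whether the tag is valid.
Verifier = Callable[[bytes, bytes, bytes], bool]


def make_hmac_verifier(hash_name: str) -> Verifier:
    """Stand-in verifier. A real system would wrap a signature scheme here."""
    def verify(key: bytes, message: bytes, tag: bytes) -> bool:
        expected = hmac.new(key, message, hash_name).digest()
        return hmac.compare_digest(expected, tag)
    return verify


# Registry of currently accepted algorithms, keyed by identifier.
REGISTRY: Dict[str, Verifier] = {"standin-a": make_hmac_verifier("sha256")}


def register(alg_id: str, verifier: Verifier) -> None:
    """Add a new algorithm, for example after a field update."""
    REGISTRY[alg_id] = verifier


def retire(alg_id: str) -> None:
    """Remove an algorithm that is no longer considered secure."""
    REGISTRY.pop(alg_id, None)


def verify_image(alg_id: str, key: bytes, image: bytes, tag: bytes) -> bool:
    """Verify an image with the named algorithm; unknown algorithms fail closed."""
    verifier = REGISTRY.get(alg_id)
    if verifier is None:
        return False
    return verifier(key, image, tag)
```

Adding a successor algorithm is then a call to `register("standin-b", make_hmac_verifier("sha3_256"))`, and withdrawing a weakened one is a call to `retire("standin-a")`. Neither touches the code path that loads images. On an FPGA the same idea applies one level down: the verifier behind an identifier can be a reconfigurable hardware block rather than a software function.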

The Triangle: How the Three Technologies Work Together

Each technology addresses a specific layer of the security problem:

- FPGA guarantees physical safety at the hardware level. It enforces real-time constraints, performs sensor fusion, and runs safety logic that software cannot override.

- TPM ensures platform trust. It verifies that only authenticated firmware and bitstreams are loaded, stores cryptographic keys securely, and enables the humanoid to prove its integrity to external systems.

- PQC provides long-term data protection. It secures communications and identity against quantum threats over the full service life of the machine.

Together, they create a trusted, deterministic, and future-proof security architecture — one where safety is guaranteed by hardware, trust is anchored in a proven standard, and cryptographic protection extends decades into the future.

From Concept to Hardware: Joint FPGA + TPM + PQC Demo

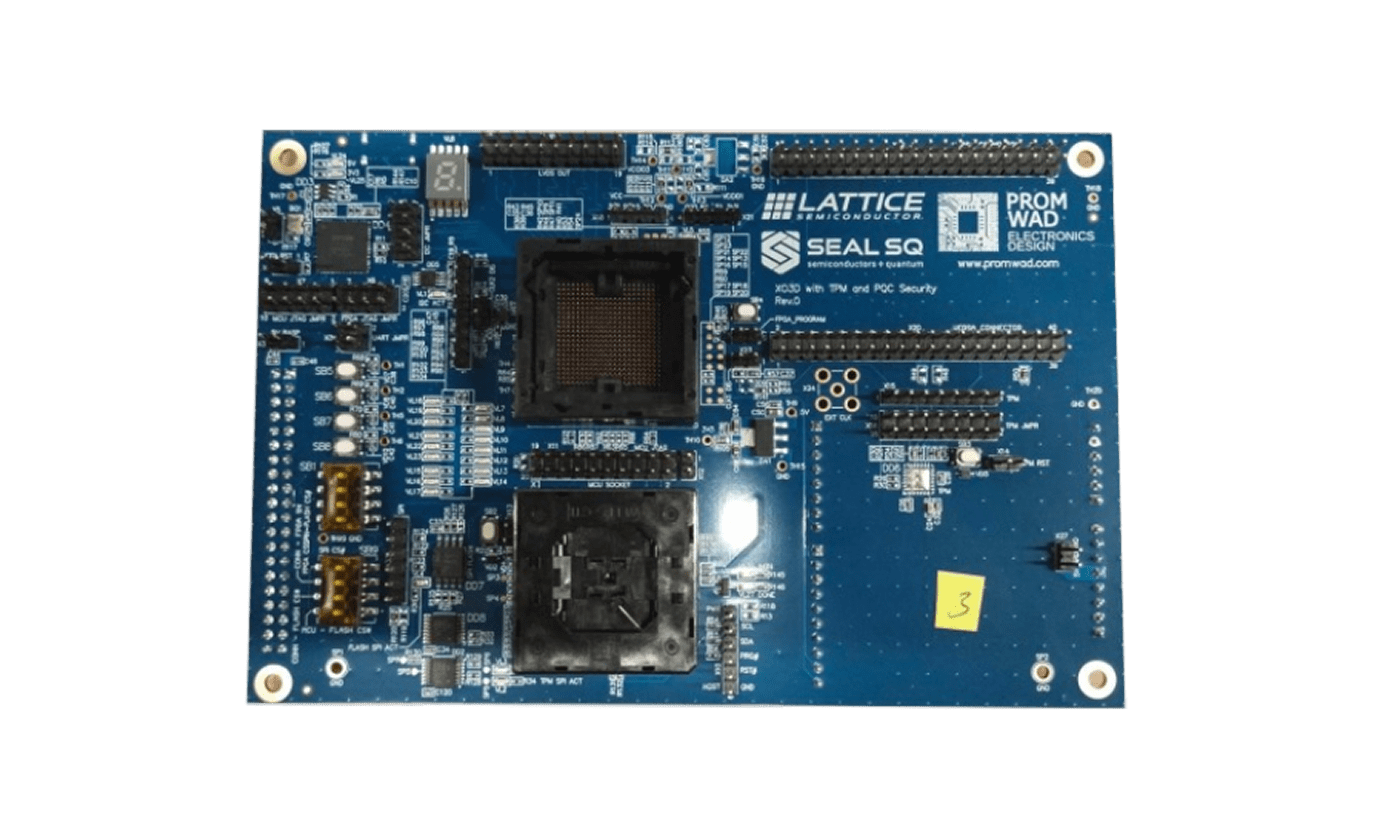

This architecture is not theoretical. At Embedded World 2026, Promwad presented a joint hardware demonstrator with Lattice Semiconductor and SEALSQ that brings the FPGA + TPM + PQC triangle to life on a single platform.

The demo features PQC-signed FPGA bitstream validation, an integrated Root of Trust with TPM, and a PQC crypto co-processor accessible directly from the FPGA fabric.

The demonstrator addresses a concrete industry problem: devices in robotics, drones, and critical infrastructure stay in service for 10–20 years, while security requirements, attack methods, and regulations — such as the EU Cyber Resilience Act and the Radio Equipment Directive — keep evolving. A static security model cannot keep up.

The platform shows how security can remain hardware-anchored while cryptographic implementations stay updatable — balancing performance, compliance, and cryptographic evolution in a single design.

Promwad's contribution focused on the hardware implementation: schematic capture, PCB layout, and release to manufacturing — the kind of engineering work we deliver every day for product companies turning complex security requirements into production-ready hardware.

What This Means for R&D Leaders

If you are designing an autonomous system today — humanoid, drone, mobile robot, or industrial platform — the message is straightforward: security must be a design-time decision, not a retrofit. Bolting on security after the architecture is set introduces vulnerabilities that are expensive or impossible to fix later.

Start with a hardware root of trust. Use an FPGA for deterministic safety enforcement. Plan for post-quantum cryptography from day one. And leverage proven standards like TPM rather than inventing proprietary solutions that may carry undetected security flaws.

The technologies exist. The regulatory pressure is building. The question is whether your platform will be ready.

Promwad is an independent electronics design house with 150+ engineers specialising in hardware and software development for safety-critical and security-sensitive systems. To discuss how FPGA, TPM, and PQC can be integrated into your platform architecture, contact our team.

This article is based on insights from a joint security seminar with Lattice Semiconductor and SEALSQ. Watch the full recording →